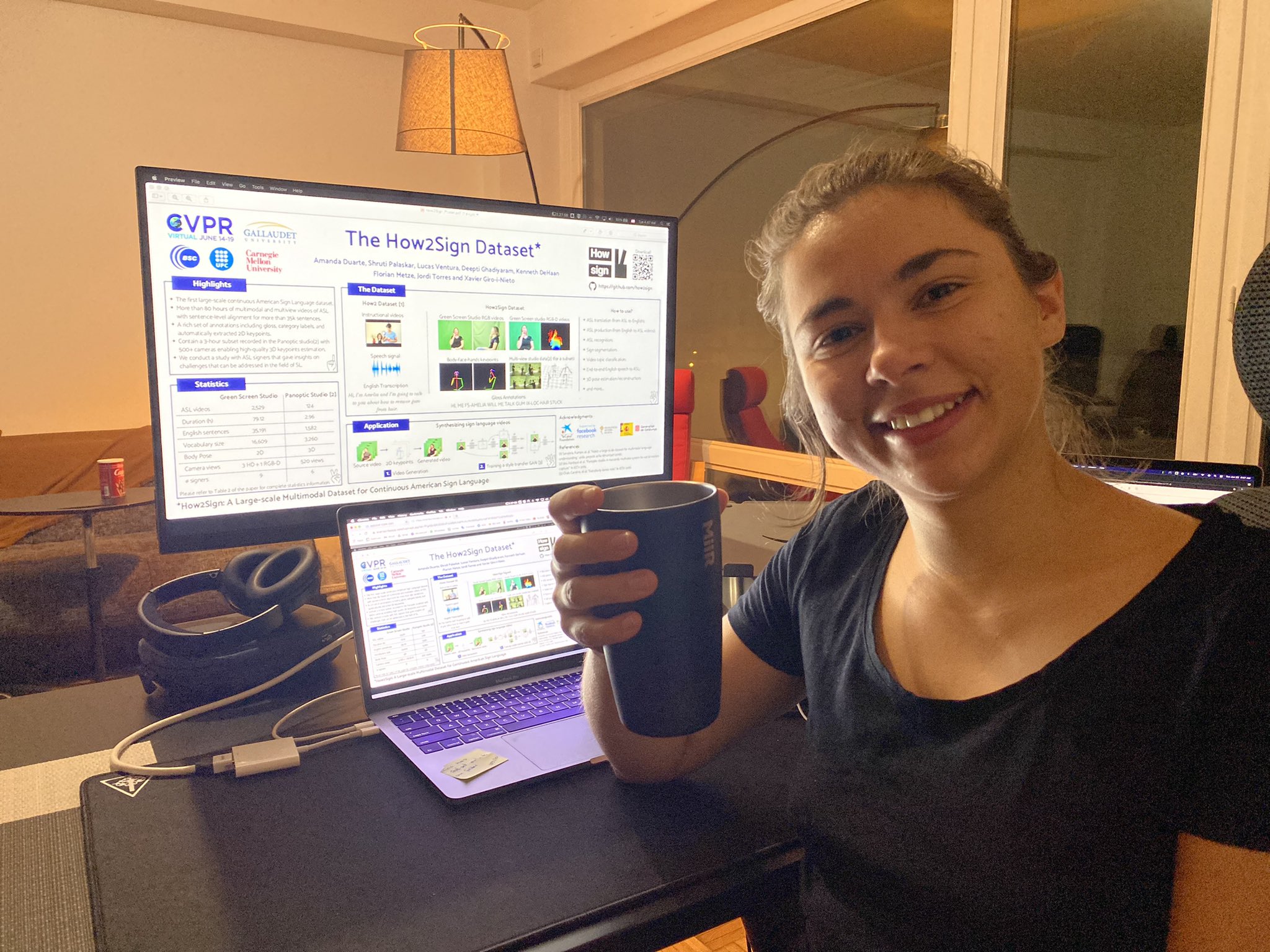

Abstract

Sign Language is the primary means of communication for the majority of the Deaf community. One of the factors that has hindered the progress in the areas of automatic sign language recognition, generation, and translation is the absence of large annotated datasets, especially continuous sign language datasets, i.e. datasets that are annotated and segmented at the sentence or utterance level. Towards this end, in this work we introduce How2Sign, a work-in-progress dataset collection. How2Sign consists of a parallel corpus of 80 hours of sign language videos (collected with multi-view RGB and depth sensor data) with corresponding speech transcriptions and gloss annotations. In addition, a three-hour subset was further recorded in a geodesic dome setup using hundreds of cameras and sensors, which enables detailed 3D reconstruction and pose estimation and paves the way for vision systems to understand the 3D geometry of sign language.

- Project page

- Paper in the CVPR 2021 proceedings and on arXiv

- Poster

- CVPR 2021 (acceptance rate: 23.6%)

- CVPR 2021 Women in Computer Vision Workshop

- ECCV 2020 Workshop on Sign Language Recognition, Translation and Production (SLRTP)

- Tweets at ECCV 2020: @DocXavi, @amandacduarte

- Tweets at CVPR 2021: @amandacduarte, @DocXavi

- Blog post by Facebook AI

- Press release: TV3, BSC.