USEFUL Dataset

| Resource Type | Date |
|---|---|
| Dataset | 2026-03-26 |

Description

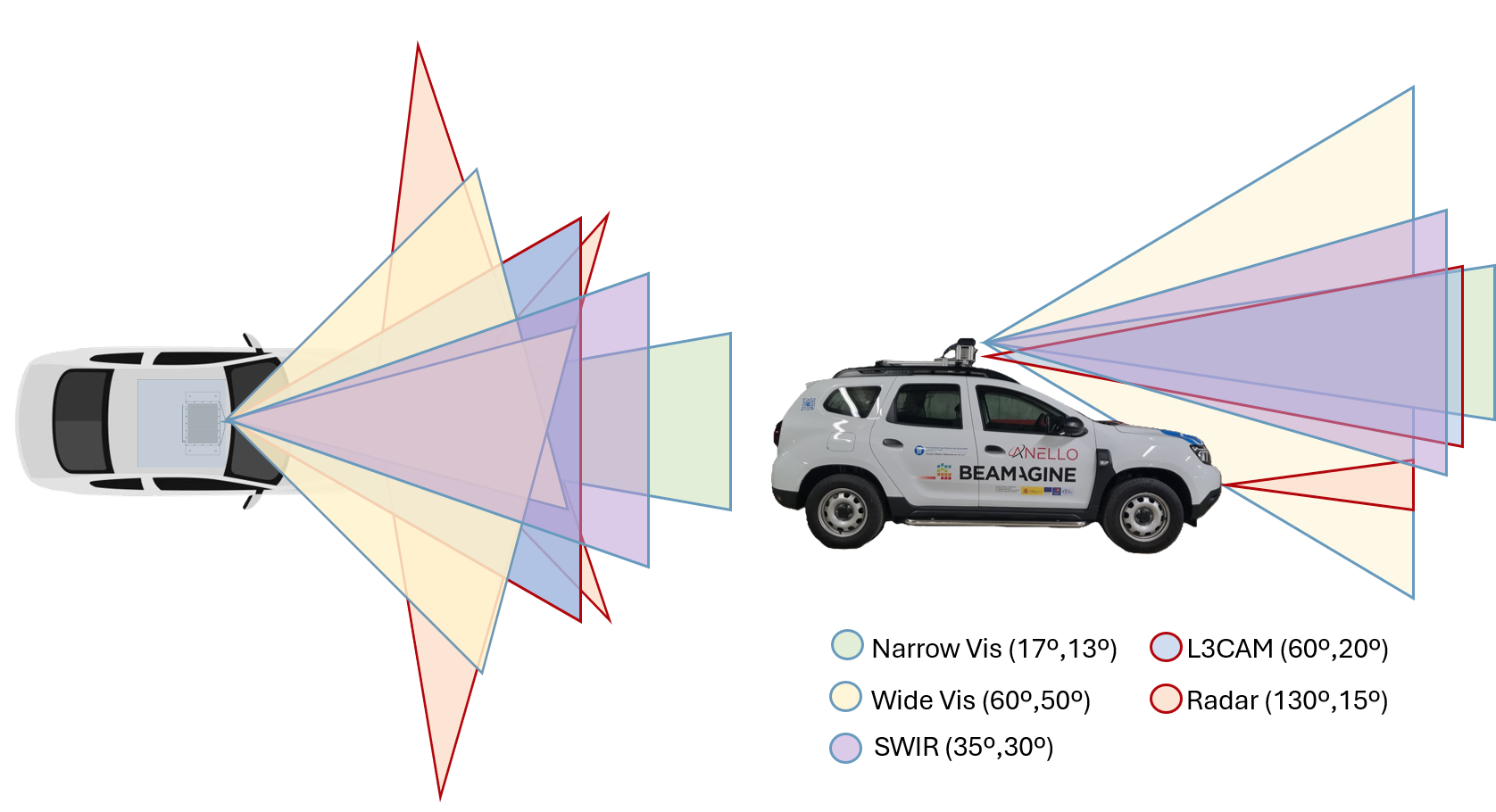

USEFUL is a multimodal autonomous driving perception dataset specifically designed to advance research in adverse visibility conditions (nighttime, glare, fog, or rain), where conventional passive imagery is insufficient.

The dataset includes 10 synchronized sensor streams — LiDAR, 2x Radar, 3x RGB cameras, Thermal (LWIR), SWIR, and Polarimetric — along with precise 3D and bounding box annotations and ego-pose data (GPS/INS).

The dataset features a development kit with a Python API to facilitate the loading, visualization, and export of the data.

The dataset is the result of an ongoing collaboration within the framework of the USEFUL project with A. Subirana, P. García-Gómez, E. Bernal Pérez, and the whole team from CD6-UPC / Beamagine.

mUltimodal Sensing and pErception For autonomoUs vehicLes

Dataset:

G. DeMas-Giménez, A. Subirana, P. García-Gómez, E. Bernal, J. R. Casas, and S. Royo, “USEFUL dataset: mUltimodal Sensing and pErception For autonomoUs vehicLes,” Hugging Face, 2026. DOI: 10.57967/hf/8147

Devkit:

The USEFUL devkit provides a Python API to load, query, visualize, and export all dataset content.

GitHub: https://github.com/GDMG99/useful-devkit

People involved

| Josep R. Casas | Associate Professor |